USE CASE

THE CHALLENGE:

1. Outcome & Business Gaps

Traditional KPIs such as AHT, SLA, occupancy, and abandon rate were optimised. However, they did not fully reflect customer outcomes such as resolution quality, repeat contact behaviour, or cross-channel journey effectiveness.

As the centre evolved into an omnichannel environment — voice, chat, messaging, bots, email — reporting remained fragmented. Channels did not map cleanly to legacy metrics. Double counting occurred. Comparisons were unreliable.

Without stitched interaction data, First Contact Resolution became an estimate rather than a trusted measure. Root cause analysis relied on partial information.

Reporting was descriptive. It showed what happened — not why.

2. Data Consistency & Metric Definition Issues

A deeper technical challenge emerged: inconsistent metric calculation across platforms.

Different systems defined “answered,” “offered,” “abandoned,” and “transferred” differently. Transfers were sometimes double counted. Segments were treated inconsistently.

Finance, operations, and technology teams could all report different figures from the same underlying interactions.

Trust eroded. Internal disputes increased. Executive confidence declined.
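The double-counting problem can be illustrated with a minimal sketch. It assumes hypothetical segment records (one row per leg of a conversation) and an assumed set of rules: a conversation is offered once, counts as answered if any leg was answered, and counts as transferred once no matter how many legs it has. The field names and rules are illustrative, not the definitions used by any particular platform.

```python
from collections import defaultdict

# Hypothetical segment-level records: one row per leg of a conversation.
segments = [
    {"conversation_id": "c1", "outcome": "answered"},
    {"conversation_id": "c1", "outcome": "transferred"},
    {"conversation_id": "c1", "outcome": "answered"},
    {"conversation_id": "c2", "outcome": "abandoned"},
]


def summarise(rows):
    """Count each conversation once, regardless of how many segments it has."""
    outcomes_by_conversation = defaultdict(set)
    for row in rows:
        outcomes_by_conversation[row["conversation_id"]].add(row["outcome"])

    totals = {"offered": 0, "answered": 0, "abandoned": 0, "transferred": 0}
    for outcomes in outcomes_by_conversation.values():
        totals["offered"] += 1
        if "answered" in outcomes:
            totals["answered"] += 1
        elif "abandoned" in outcomes:
            # Assumed rule: only abandoned if no leg was ever answered.
            totals["abandoned"] += 1
        if "transferred" in outcomes:
            totals["transferred"] += 1
    return totals


# A naive per-segment count would report two answered contacts for c1.
naive_answered = sum(1 for s in segments if s["outcome"] == "answered")
result = summarise(segments)
```

Here the naive count reports two answered contacts while the conversation-level count reports one, which is the kind of gap that lets two teams produce different figures from the same interactions.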

3. Timeliness & Latency Constraints

Reporting operated across multiple horizons:

- Real-time wallboards

- Near real-time dashboards

- Intra-day reporting

- Historical and compliance reporting

API rate limits, asynchronous job endpoints, and retention windows introduced unavoidable latency. Some figures were eventually consistent — meaning KPIs could shift after initial reporting.

Operational decisions were being made on incomplete or partially updated data.

4. Mutating & Incomplete Records

Contact centre data is dynamic.

- Conversations include multiple segments and transfers.

- Wrap-up codes can be updated post-call.

- Tickets reopen.

- Quality scores are applied later.

Without architecture designed for mutability, systems either duplicated records or failed to reflect updates in historical reporting.

Trend analysis became unreliable. Engineering overhead increased.
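One common way to handle mutable records is an idempotent upsert keyed on the conversation identifier, where a newer version replaces the stored one and a stale version is ignored. The sketch below assumes each update carries a hypothetical `updated_at` timestamp; the field names and the in-memory dictionary are stand-ins for whatever store is actually used.

```python
from datetime import datetime


def upsert(store, record):
    """Keep exactly one row per conversation, holding the latest version."""
    key = record["conversation_id"]
    current = store.get(key)
    incoming_ts = datetime.fromisoformat(record["updated_at"])
    if current is None or incoming_ts >= datetime.fromisoformat(current["updated_at"]):
        store[key] = record
    # Older versions that arrive late are dropped rather than duplicated.
    return store


# Hypothetical updates for one conversation, delivered out of order.
updates = [
    {"conversation_id": "c1", "wrap_up": None, "qa_score": None,
     "updated_at": "2024-03-01T10:05:00+00:00"},
    {"conversation_id": "c1", "wrap_up": "billing", "qa_score": 88,
     "updated_at": "2024-03-02T09:00:00+00:00"},
    {"conversation_id": "c1", "wrap_up": "billing", "qa_score": None,
     "updated_at": "2024-03-01T10:20:00+00:00"},  # stale, arrives last
]

store = {}
for update in updates:
    upsert(store, update)
```

After all three updates the store still holds a single row for `c1`, carrying the wrap-up code and quality score from the most recent version.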

5. Time Zone & Interval Complexity

Multi-region operations introduced additional complexity. Daylight saving transitions, string-based time intervals, and local UTC offsets materially affected SLA and workforce calculations.

Small inconsistencies in time handling created measurable forecasting and compliance issues.
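As a small illustration of why this matters, the sketch below counts the 30-minute reporting intervals in one local calendar day. It uses Python's standard `zoneinfo` module (which needs time zone data on the host) and takes `Australia/Sydney` as an assumed example region; the helper names are hypothetical.

```python
from datetime import date, datetime, timedelta, timezone
from zoneinfo import ZoneInfo


def local_day_bounds_utc(day, tz_name):
    """Return the UTC instants at which a local calendar day starts and ends."""
    tz = ZoneInfo(tz_name)
    next_day = day + timedelta(days=1)
    start = datetime(day.year, day.month, day.day, tzinfo=tz)
    end = datetime(next_day.year, next_day.month, next_day.day, tzinfo=tz)
    # Convert to UTC before subtracting so the elapsed time is real, not wall-clock.
    return start.astimezone(timezone.utc), end.astimezone(timezone.utc)


def interval_count(day, tz_name, minutes=30):
    """Number of fixed-length intervals in one local calendar day."""
    start, end = local_day_bounds_utc(day, tz_name)
    return int((end - start) / timedelta(minutes=minutes))


normal_day = interval_count(date(2024, 3, 1), "Australia/Sydney")
dst_end_day = interval_count(date(2024, 4, 7), "Australia/Sydney")
dst_start_day = interval_count(date(2024, 10, 6), "Australia/Sydney")
```

A pipeline that assumes 48 intervals per day will misalign on the two transition days, which have 50 and 46 intervals respectively in this region. Storing timestamps in UTC and converting to local time only at reporting time avoids that class of error.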

6. Integration Debt & Platform Constraints

Polling APIs with rate limits required bespoke adaptors and scheduled extraction logic. Over time, reporting pipelines became fragile, expensive, and difficult to scale when new channels or regions were introduced.

The centre needed more than dashboards.

It needed architectural stabilisation.

Required Outcomes:

The organisation defined a clear operational objective:

Create a unified, consistent, and near real-time reporting layer that:

- Standardises metric definitions across channels

- Stitches interactions into complete customer journeys

- Handles late-arriving and mutable data correctly

- Separates operational real-time views from validated historical reporting

- Maintains consistency across regions and time zones

- Reduces bespoke integration debt

This was not simply a reporting enhancement. It was an operational resilience requirement.

How the emite Platform Helped

The emite Platform provided the structural foundation required to stabilise reporting and accelerate decision velocity.

Unified Metric Standardisation

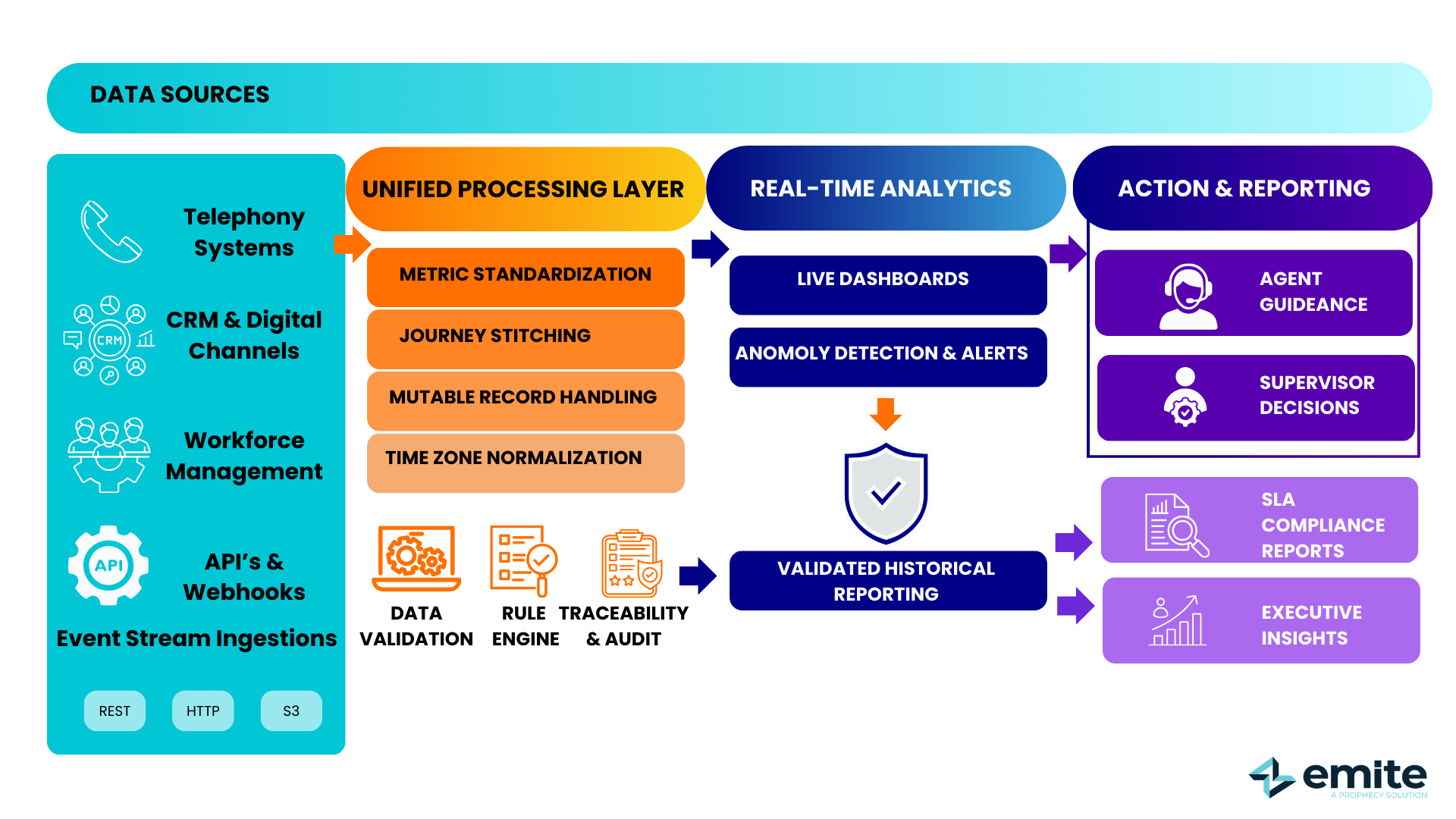

emite Advanced iPaaS retrieved operational data directly from REST APIs, HTTP endpoints, and S3-compatible sources, consolidating fragmented datasets across voice, digital, CRM, and workforce platforms.

Human-defined business rules were applied during processing to standardise metric definitions across channels — eliminating disputes over SLA, abandon, and transfer calculations.
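The idea of a rule-based standardisation step can be sketched as a lookup from each source system's vocabulary to one canonical set of outcomes. This is an illustrative sketch only: the source names, status strings, and `normalise` function are hypothetical and do not represent emite's configuration or API.

```python
# Hypothetical mapping from (source system, native status) to a canonical outcome.
CANONICAL_OUTCOMES = {
    ("voice_platform", "ANSWERED"): "answered",
    ("voice_platform", "ABANDON"): "abandoned",
    ("voice_platform", "XFER"): "transferred",
    ("chat_platform", "agent_joined"): "answered",
    ("chat_platform", "customer_left_queue"): "abandoned",
}


def normalise(source, status):
    """Translate a platform-specific status into the agreed canonical outcome."""
    try:
        return CANONICAL_OUTCOMES[(source, status)]
    except KeyError:
        # Fail loudly so unmapped statuses are reviewed instead of silently miscounted.
        raise ValueError(f"No canonical rule for {source!r} status {status!r}")


voice_outcome = normalise("voice_platform", "ABANDON")
chat_outcome = normalise("chat_platform", "customer_left_queue")
```

Keeping the rules in one reviewed table means every downstream report inherits the same definition of "abandoned", whichever channel the interaction came from.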

Journey Stitching & Mutability Handling

Interaction segments were stitched into complete customer journeys, allowing reliable measurement of cross-channel resolution and repeat contact behaviour. The architecture accounted for late updates and mutable records without creating duplicates.
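A minimal sketch of journey stitching follows. It assumes interactions carry a hypothetical `customer_id` and `started_at`, and that contacts from the same customer within seven days of the previous contact belong to one journey. Both the window and the definition of First Contact Resolution used here (a journey with exactly one contact) are assumptions for illustration.

```python
from datetime import datetime, timedelta
from itertools import groupby


def stitch(interactions, window=timedelta(days=7)):
    """Group each customer's interactions into journeys separated by quiet gaps."""
    ordered = sorted(interactions, key=lambda i: (i["customer_id"], i["started_at"]))
    journeys = []
    for _, contacts in groupby(ordered, key=lambda i: i["customer_id"]):
        current = []
        for contact in contacts:
            # A gap longer than the window starts a new journey.
            if current and contact["started_at"] - current[-1]["started_at"] > window:
                journeys.append(current)
                current = []
            current.append(contact)
        journeys.append(current)
    return journeys


# Hypothetical cross-channel interactions.
interactions = [
    {"customer_id": "A", "channel": "voice", "started_at": datetime(2024, 3, 1, 9, 0)},
    {"customer_id": "A", "channel": "chat", "started_at": datetime(2024, 3, 3, 14, 0)},
    {"customer_id": "A", "channel": "email", "started_at": datetime(2024, 3, 20, 8, 0)},
    {"customer_id": "B", "channel": "chat", "started_at": datetime(2024, 3, 2, 11, 0)},
]

journeys = stitch(interactions)
# Assumed definition: resolved on first contact if the journey has one contact.
fcr = sum(1 for j in journeys if len(j) == 1) / len(journeys)
```

Customer A's voice call and follow-up chat form one journey with a repeat contact, while the later email and customer B's chat are single-contact journeys. Measured per channel, all four interactions would look like separate first contacts.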

Real-Time & Validated Reporting Separation

Operational dashboards reflected live conditions, while validated historical reporting remained consistent and auditable — preventing retrospective KPI shifts from undermining trust.
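One simple way to express this separation is to label each reporting interval as provisional until a settlement period has passed, and only then promote it to validated reporting. The sketch below assumes a 24-hour settlement window and a hypothetical `report_status` helper; the actual window would depend on how late source systems deliver updates.

```python
from datetime import datetime, timedelta, timezone


def report_status(interval_end, now, settle_after=timedelta(hours=24)):
    """Label an interval as validated once late updates are no longer expected."""
    if now - interval_end >= settle_after:
        return "validated"
    return "provisional"


now = datetime(2024, 3, 2, 12, 0, tzinfo=timezone.utc)
yesterday_morning = datetime(2024, 3, 1, 9, 0, tzinfo=timezone.utc)
last_half_hour = datetime(2024, 3, 2, 11, 30, tzinfo=timezone.utc)

older_status = report_status(yesterday_morning, now)
recent_status = report_status(last_half_hour, now)
```

Live dashboards can show provisional intervals with that label visible, while historical and compliance reports read only validated intervals, so a KPI that moves after late data arrives does not alter a figure that has already been published.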

Reduced Integration Debt

Event-driven retrieval reduced reliance on brittle polling and custom extraction logic, lowering engineering overhead and improving scalability.

The contact centre shifted from fragmented reporting to governed, contextualised real-time insight.

Performance Impact Snapshot

Within six months, the organisation achieved:

- ↓ 30% reduction in metric reconciliation disputes

- ↑ 20% improvement in cross-channel performance visibility

- ↑ 25% faster anomaly detection

- ↑ Increased executive trust in reporting accuracy

- ✓ Reduced engineering maintenance overhead for reporting pipelines

Operational decisions were made with confidence, not debate.

Before:

Supervisors acted on delayed dashboards and retrospective reporting.

After:

Operational leaders responded to live, contextualised insights embedded directly into workflows — supported by governed, traceable data.

Executive Takeaway

When reporting is inconsistent or delayed:

- Operational decisions degrade

- Customer experience suffers

- Financial forecasting weakens

- Executive trust declines

When reporting is unified, governed, and near real-time:

- Performance optimisation becomes accurate

- Omnichannel insight becomes real

- Compliance reporting becomes reliable

- Workforce planning improves

- Decision velocity increases